VisText: Teaching AI to Write Better Accessible Chart Captions

Author: Massachusetts Institute of Technology

Published: 2023/07/02 - Updated: 2024/10/02

Publication Type: Reports & Proceedings


Synopsis: This article discusses the development of VisText, a new dataset created by MIT researchers to improve automatic chart captioning systems. This innovation is significant because it addresses a critical accessibility issue for people with visual disabilities, who often rely solely on chart captions to understand visual data. VisText enables the creation of more accurate, detailed, and semantically rich captions that describe complex trends and patterns in charts, which current autocaptioning systems struggle to articulate. By providing this dataset as a tool for researchers, VisText has the potential to enhance online chart accessibility, improve comprehension for all users, and reduce the labor-intensive process of writing effective captions manually.

- Topic Definition: VisText

VisText is a benchmark dataset of 12,441 charts paired with semantically rich captions. In the VisText dataset, each chart is represented as its rasterized image, scene graph specification, and underlying data table. Each chart is paired with a synthetic L1 caption that describes the chart's elemental and encoded properties and a human-generated L2/L3 caption that describes trends and statistics about the chart.
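As a rough sketch, one chart in a dataset like this might be held in memory as follows. The class name, field names, and example values are hypothetical and chosen for illustration; they are not the dataset's actual schema or file layout.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChartRecord:
    """One chart with its three representations and two captions.

    All field names are illustrative assumptions, not the VisText schema.
    """
    image_path: str          # rasterized chart image
    scene_graph: str         # scene graph specification, stored as text
    data_table: list[dict]   # underlying data, one dict per row
    caption_l1: str          # synthetic caption: elemental and encoded properties
    caption_l2l3: str        # human-written caption: trends and statistics

    def caption(self, level: str) -> str:
        """Return the caption for 'L1' or 'L2L3'."""
        captions = {"L1": self.caption_l1, "L2L3": self.caption_l2l3}
        if level not in captions:
            raise ValueError(f"unknown caption level: {level!r}")
        return captions[level]


# Made-up example values, not taken from the dataset.
example = ChartRecord(
    image_path="charts/example.png",
    scene_graph="chart ( title Monthly rainfall )",
    data_table=[{"month": "Jan", "rainfall_mm": 30}, {"month": "Feb", "rainfall_mm": 45}],
    caption_l1="A bar chart titled Monthly rainfall.",
    caption_l2l3="Rainfall rises from January to February.",
)
print(example.caption("L2L3"))
```

Keeping both captions on the same record makes it straightforward to pick the level of detail at the point of use.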

Introduction

"VisText: A Benchmark for Semantically Rich Chart Captioning" - Massachusetts Institute of Technology.

Chart captions that explain complex trends and patterns are important for improving a reader's ability to comprehend and retain the data being presented. And for people with visual disabilities, the information in a caption often provides their only means of understanding the chart.

Main Content

1 - Researchers Teach Artificial Intelligence (AI) to Write Accessible Chart Captions

Writing effective, detailed captions is a labor-intensive process. While autocaptioning techniques can alleviate this burden, they often struggle to describe cognitive features that provide additional context.

To help people author high-quality chart captions, MIT researchers have developed a dataset to improve automatic captioning systems. Using this tool, researchers could teach a machine-learning model to vary the level of complexity and type of content included in a chart caption based on the needs of users.

The MIT researchers found that machine-learning models trained for autocaptioning with their dataset consistently generated captions that were precise, semantically rich, and described data trends and complex patterns. Quantitative and qualitative analyses revealed that their models captioned charts more effectively than other autocaptioning systems.

The team's goal is to provide the dataset, called VisText, as a tool researchers can use as they work on the thorny problem of chart autocaptioning. These automatic systems could help provide captions for uncaptioned online charts and improve accessibility for people with visual disabilities, says co-lead author Angie Boggust, a graduate student in electrical engineering and computer science at MIT and member of the Visualization Group in the Computer Science and Artificial Intelligence Laboratory (CSAIL).

"We've tried to embed a lot of human values into our dataset so that when we and other researchers are building automatic chart-captioning systems, we don't end up with models that aren't what people want or need," she says.

Boggust is joined on the paper by co-lead author and fellow graduate student Benny J. Tang and senior author Arvind Satyanarayan, associate professor of computer science at MIT who leads the Visualization Group in CSAIL. The research will be presented at the Annual Meeting of the Association for Computational Linguistics.

Human-Centered Analysis

The researchers were inspired to develop VisText from prior work in the Visualization Group that explored what makes a good chart caption. In that study, researchers found that sighted users and blind or low-vision users had different preferences for the complexity of semantic content in a caption.

The group wanted to bring that human-centered analysis into autocaptioning research. To do that, they developed VisText, a dataset of charts and associated captions that could be used to train machine-learning models to generate accurate, semantically rich, customizable captions.

Developing effective autocaptioning systems is no easy task. Existing machine-learning methods often try to caption charts the way they would an image, but people and models interpret natural images differently from how we read charts. Other techniques skip the visual content entirely and caption a chart using its underlying data table. However, such data tables are often not available after charts are published.

Given the shortfalls of using images and data tables, VisText also represents charts as scene graphs. Scene graphs, which can be extracted from a chart image, contain all the chart data but also include additional image context.
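A scene graph can be pictured as a nested description of what is drawn in a chart. The sketch below flattens a toy scene graph into a single string with a depth-first walk, the kind of form a text-based model could consume. The node keys (`role`, `value`, `children`) and the bracketed output format are assumptions made for this example, not the format VisText uses.

```python
from typing import Any


def linearize(node: dict[str, Any]) -> str:
    """Depth-first flattening of a nested scene graph into one line of text."""
    label = node["role"]
    if "value" in node:
        label += f" {node['value']}"
    children = node.get("children", [])
    if not children:
        return label
    inner = " ".join(linearize(child) for child in children)
    return f"{label} ( {inner} )"


# Toy scene graph with a hypothetical structure.
toy_scene_graph = {
    "role": "chart",
    "children": [
        {"role": "title", "value": "Monthly rainfall"},
        {
            "role": "x-axis",
            "children": [
                {"role": "tick", "value": "Jan"},
                {"role": "tick", "value": "Feb"},
            ],
        },
        {"role": "mark", "value": "bar Jan 30"},
    ],
}

print(linearize(toy_scene_graph))
# chart ( title Monthly rainfall x-axis ( tick Jan tick Feb ) mark bar Jan 30 )
```

The flattened string keeps both the data (the bar for January) and the visual context (title, axis ticks) in one sequence.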

"A scene graph is like the best of both worlds - it contains almost all the information present in an image while being easier to extract from images than data tables. As it's also text, we can leverage advances in modern large language models for captioning," Tang explains.

They compiled a dataset that contains more than 12,000 charts - each represented as a data table, image, and scene graph - as well as associated captions. Each chart has two separate captions: a low-level caption that describes the chart's construction (like its axis ranges) and a higher-level caption that describes statistics, relationships in the data, and complex trends.

The researchers generated low-level captions using an automated system and crowdsourced higher-level captions from human workers.
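An automated low-level caption can be as simple as a filled-in template. The following is a minimal sketch under that assumption; the function name, parameters, and wording are hypothetical and do not reproduce the researchers' system.

```python
def synthetic_l1_caption(chart_type: str, title: str, x_label: str,
                         y_label: str, y_min: float, y_max: float) -> str:
    """Fill a fixed template with a chart's construction details."""
    return (
        f"This is a {chart_type} chart titled {title}. "
        f"The x-axis shows {x_label}. "
        f"The y-axis shows {y_label} and ranges from {y_min} to {y_max}."
    )


# Made-up chart properties for illustration.
print(synthetic_l1_caption("bar", "Monthly rainfall", "Month", "Rainfall (mm)", 0, 120))
```

A template like this covers axes, scales, and units reliably, but it cannot say anything about trends, which is where human-written captions come in.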

"Our captions were informed by two key pieces of prior research: existing guidelines on accessible descriptions of visual media and a conceptual model from our group for categorizing semantic content. This ensured that our captions featured important low-level chart elements like axes, scales, and units for readers with visual disabilities, while retaining human variability in how captions can be written," says Tang.

Translating Charts

Once they had gathered chart images and captions, the researchers used VisText to train five machine-learning models for autocaptioning. They wanted to see how each representation - image, data table, and scene graph - and combinations of the representations affected the quality of the caption.

"You can think about a chart captioning model like a model for language translation. But instead of saying, translate this German text to English, we are saying translate this 'chart language' to English," Boggust says.

Their results showed that models trained with scene graphs performed as well as or better than those trained using data tables. Since scene graphs are easier to extract from existing charts, the researchers argue that they might be a more useful representation.

They also trained models with low-level and high-level captions separately. This technique, known as semantic prefix tuning, enabled them to teach the model to vary the complexity of the caption's content.
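One common way to let a single text-to-text model produce either kind of caption is to prepend a short tag to the input naming the desired level. Here is a minimal sketch of building such training pairs; the prefix strings and function name are assumptions for illustration and may differ from what the researchers used.

```python
# Hypothetical prefixes that tell the model which caption level to produce.
PREFIXES = {
    "L1": "translate chart to L1: ",
    "L2L3": "translate chart to L2L3: ",
}


def make_training_pairs(chart_text: str, caption_l1: str,
                        caption_l2l3: str) -> list[tuple[str, str]]:
    """Turn one chart into two (input, target) pairs, one per caption level."""
    return [
        (PREFIXES["L1"] + chart_text, caption_l1),
        (PREFIXES["L2L3"] + chart_text, caption_l2l3),
    ]


# Made-up chart text and captions.
pairs = make_training_pairs(
    chart_text="chart ( title Monthly rainfall )",
    caption_l1="A bar chart titled Monthly rainfall.",
    caption_l2l3="Rainfall rises from January to February.",
)
for model_input, target in pairs:
    print(model_input, "->", target)
```

At generation time, the same prefix would select the level of detail: the chart text stays the same and only the tag changes.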

In addition, they conducted a qualitative examination of captions produced by their best-performing method and categorized six types of common errors. For instance, a directional error occurs if a model says a trend is decreasing when it is actually increasing.

This fine-grained, robust qualitative evaluation was important for understanding how the model was making its errors. For example, using quantitative methods, a directional error might incur the same penalty as a repetition error, where the model repeats the same word or phrase. But a directional error could be more misleading to a user than a repetition error. The qualitative analysis helped them understand these types of subtleties, Boggust says.
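To make the point concrete, the sketch below scores two flawed captions with clipped unigram precision, a simplified stand-in for word-overlap metrics. It is not the evaluation used in the study, and the captions are invented for this example.

```python
from collections import Counter


def clipped_unigram_precision(candidate: str, reference: str) -> float:
    """Fraction of candidate words found in the reference, counting each
    reference word at most as often as it appears there."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    total = sum(cand.values())
    if total == 0:
        return 0.0
    overlap = sum(min(count, ref[token]) for token, count in cand.items())
    return overlap / total


reference = "sales increased steadily from 2010 to 2020"
directional_error = "sales decreased steadily from 2010 to 2020"  # wrong trend
repetition_error = "sales increased steadily from 2010 to to"     # repeated word

print(clipped_unigram_precision(directional_error, reference))
print(clipped_unigram_precision(repetition_error, reference))
```

Both captions match six of their seven words, so they receive the same score, even though the first one reverses the trend and would mislead a reader far more than the second.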

These sorts of errors also expose limitations of current models and raise ethical questions that researchers must weigh as they work to develop autocaptioning systems, she adds.

Generative machine-learning models, such as those that power ChatGPT, have been shown to hallucinate or give incorrect information that can be misleading. While there is a clear benefit to using these models for autocaptioning existing charts, it could lead to the spread of misinformation if charts are captioned incorrectly.

"Maybe this means that we don't just caption everything in sight with AI. Instead, perhaps we provide these autocaptioning systems as authorship tools for people to edit. It is important to think about these ethical implications throughout the research process, not just at the end when we have a model to deploy," she says.

Boggust, Tang, and their colleagues want to continue optimizing the models to reduce some common errors. They also want to expand the VisText dataset to include more charts, and more complex charts, such as those with stacked bars or multiple lines. And they would also like to gain insights into what these autocaptioning models are actually learning about chart data.

This research was supported, in part, by a Google Research Scholar Award, the National Science Foundation, the MLA@CSAIL Initiative, and the United States Air Force Research Laboratory.

2 - Blind and Sighted Readers Have Sharply Different Takes on What Content Is Most Useful to Include in a Chart Caption

By Adam Zewe, Massachusetts Institute of Technology (October 12, 2021)

In the early days of the COVID-19 pandemic, the Centers for Disease Control and Prevention produced a simple chart to illustrate how measures like mask wearing and social distancing could "flatten the curve" and reduce the peak of infections.

The chart was amplified by news sites and shared on social media platforms, but it often lacked a corresponding text description to make it accessible for blind individuals who use a screen reader to navigate the web, shutting out many of the 253 million people worldwide who have visual disabilities.

This alternative text is often missing from online charts, and even when it is included, it is frequently uninformative or even incorrect, according to qualitative data gathered by scientists at MIT.

These researchers conducted a study with blind and sighted readers to determine which text is useful to include in a chart description, which text is not, and why. Ultimately, they found that captions for blind readers should focus on the overall trends and statistics in the chart, not its design elements or higher-level insights.

They also created a conceptual model that can be used to evaluate a chart description, whether the text was generated automatically by software or manually by a human author. Their work could help journalists, academics, and communicators create descriptions that are more effective for blind individuals and guide researchers as they develop better tools to automatically generate captions.

"Ninety-nine-point-nine percent of images on X lack any kind of description-and that is not hyperbole, that is the actual statistic," says Alan Lundgard, a graduate student in the Computer Science and Artificial Intelligence Laboratory (CSAIL) and lead author of the paper. "Having people manually author those descriptions seems to be difficult for a variety of reasons. Perhaps semiautonomous tools could help with that. But it is crucial to do this preliminary participatory design work to figure out what is the target for these tools, so we are not generating content that is either not useful to its intended audience or, in the worst case, erroneous."

Lundgard wrote the paper with senior author Arvind Satyanarayan, an assistant professor of computer science who leads the Visualization Group in CSAIL. The research will be presented at the Institute of Electrical and Electronics Engineers Visualization Conference in October.

Evaluating Visualizations

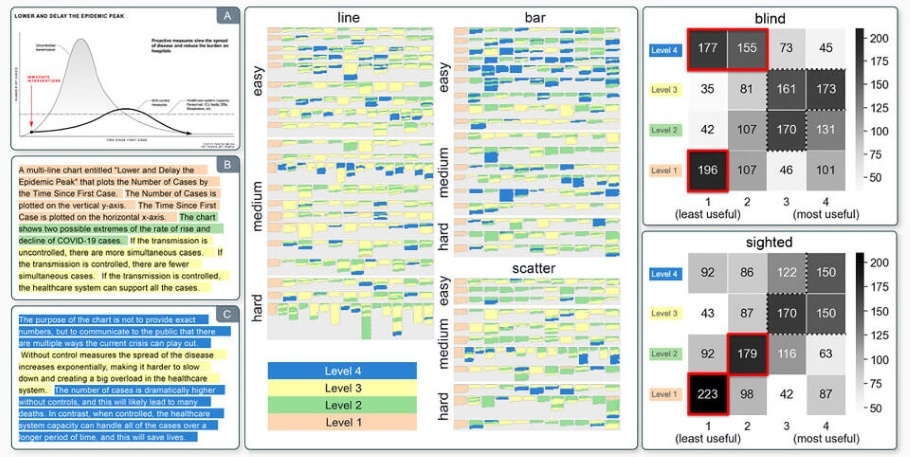

To develop the conceptual model, the researchers planned to begin by studying graphs featured by popular online publications such as FiveThirtyEight and The New York Times, but they ran into a problem: those charts mostly lacked any textual descriptions. So instead, they collected descriptions for these charts from graduate students in an MIT data visualization class and through an online survey, then grouped the captions into four categories.

- Level 1 descriptions focus on the elements of the chart, such as its title, legend, and colors.

- Level 2 descriptions describe statistical content, like the minimum, maximum, or correlations.

- Level 3 descriptions cover perceptual interpretations of the data, like complex trends or clusters.

- Level 4 descriptions include subjective interpretations that go beyond the data and draw on the author's knowledge.
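The four levels above can be sketched as a small data type, with a helper that assembles a description from whichever levels a caller selects. The names and example sentences are hypothetical; which levels suit a given audience is left to the caller.

```python
from enum import IntEnum


class SemanticLevel(IntEnum):
    ELEMENTS = 1     # title, legend, colors
    STATISTICS = 2   # minimum, maximum, correlations
    PERCEPTUAL = 3   # complex trends, clusters
    SUBJECTIVE = 4   # interpretation drawing on the author's knowledge


def compose_description(sentences: dict[SemanticLevel, str],
                        levels: set[SemanticLevel]) -> str:
    """Join the sentences for the requested levels, in ascending level order."""
    return " ".join(sentences[level] for level in sorted(levels) if level in sentences)


# Made-up sentences, one per level.
sentences = {
    SemanticLevel.ELEMENTS: "A line chart titled Daily cases.",
    SemanticLevel.STATISTICS: "The maximum is 120 cases.",
    SemanticLevel.PERCEPTUAL: "Cases rise sharply, then level off.",
    SemanticLevel.SUBJECTIVE: "The slowdown suggests the measures worked.",
}

print(compose_description(sentences, {SemanticLevel.STATISTICS, SemanticLevel.PERCEPTUAL}))
# The maximum is 120 cases. Cases rise sharply, then level off.
```

Tagging each sentence with its level keeps the choice of content separate from the writing of it, so the same material can serve readers with different preferences.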

In a study with blind and sighted readers, the researchers presented visualizations with descriptions at different levels and asked participants to rate how useful they were. While both groups agreed that level 1 content on its own was not very helpful, sighted readers gave level 4 content the highest marks while blind readers ranked that content among the least useful.

Survey results revealed that a majority of blind readers were emphatic that descriptions should not contain an author's editorialization, but rather stick to straight facts about the data. On the other hand, most sighted readers preferred a description that told a story about the data.

"For me, a surprising finding about the lack of utility for the highest-level content is that it ties very closely to feelings about agency and control as a disabled person. In our research, blind readers specifically didn't want the descriptions to tell them what to think about the data. They want the data to be accessible in a way that allows them to interpret it for themselves, and they want to have the agency to do that interpretation," Lundgard says.

A More Inclusive Future

This work could have implications as data scientists continue to develop and refine machine learning methods for autogenerating captions and alternative text.

"We are not able to do it yet, but it is not inconceivable to imagine that in the future we would be able to automate the creation of some of this higher-level content and build models that target level 2 or level 3 in our framework. And now we know what the research questions are. If we want to produce these automated captions, what should those captions say? We are able to be a bit more directed in our future research because we have these four levels," Satyanarayan says.

In the future, the four-level framework could also help researchers develop machine learning models that can automatically suggest effective visualizations as part of the data analysis process, or models that can extract the most useful information from a chart.

This research could also inform future work in Satyanarayan's group that seeks to make interactive visualizations more accessible for blind readers who use a screen reader to access and interpret the information.

"The question of how to ensure that charts and graphs are accessible to screen reader users is both a socially important equity issue and a challenge that can advance the state-of-the-art in AI," says Meredith Ringel Morris, director and principal scientist of the People + AI Research team at Google Research, who was not involved with this study. "By introducing a framework for conceptualizing natural language descriptions of information graphics that is grounded in end-user needs, this work helps ensure that future AI researchers will focus their efforts on problems aligned with end-users' values."

Morris adds:

"Rich natural-language descriptions of data graphics will not only expand access to critical information for people who are blind, but will also benefit a much wider audience as eyes-free interactions via smart speakers, chatbots, and other AI-powered agents become increasingly commonplace."

Attribution/Source(s): This quality-reviewed publication was selected for publishing by the editors of Disabled World (DW) due to its relevance to the disability community. Originally authored by Massachusetts Institute of Technology and published on 2023/07/02, this content may have been edited for style, clarity, or brevity.